In the fast-moving ecommerce landscape of 2026, scraping ecommerce data is more critical than ever. Businesses that still rely on manual tracking risk falling behind because their systems cannot keep up with constant price changes, shifting rankings, and evolving consumer trends. This guide explains how to scrape ecommerce data effectively so your data strategy can keep pace with the market.

What Does It Mean to Scrape Ecommerce Data?

To scrape ecommerce data means automatically extracting structured information from ecommerce websites and marketplaces. The data can include product listings, pricing, reviews, seller performance, or search rankings, and it is then stored in databases or data pipelines for further analysis.

What matters more in 2026 is not the act of collecting data itself, but what happens after. Scraping ecommerce data has evolved into a foundation for building real-time decision systems, where data flows continuously and supports pricing strategies, competitor monitoring, and market intelligence.

Why Scrape Ecommerce Data in 2026

Ecommerce today operates at a pace that manual processes simply cannot match. Platforms like Shopee, TikTok Shop, or Amazon constantly update product visibility, pricing structures, and consumer signals. What appears as a stable listing in the morning may look completely different by the end of the day.

Relying on manual tracking in this environment creates blind spots. Teams may think they understand the market, but they are often reacting too late. Static reports provide a snapshot, not a moving picture, and by the time insights are generated, the opportunity may already have passed.

This is where scraping ecommerce data becomes critical. It allows businesses to continuously monitor the market, detect changes as they happen, and respond with precision. Instead of guessing trends or reacting to competitors, companies can operate with a real-time understanding of their landscape. The difference is subtle but powerful: it shifts decision-making from reactive to proactive.

What Ecommerce Data Can You Scrape?

When businesses begin to scrape ecommerce data, they often realize the scope is much broader than expected. The value lies not just in collecting data, but in understanding how each type contributes to decision-making. A structured view makes this clearer:

| Data Type | What You Can Extract | Business Value |
| --- | --- | --- |
| Product Data | Product names, descriptions, categories, images, variations | Catalog optimization, product benchmarking |
| Pricing Data | Current prices, discounts, and historical changes | Price intelligence, dynamic pricing strategies |
| Customer Data | Reviews, ratings, sentiment signals | Customer insights, product improvement |
| Seller & Competitor Data | Seller info, product volume, performance metrics | Competitor tracking, market positioning |
| Search & Ranking Data | Keyword rankings, search trends, and trending products | Demand analysis, product research |

Looking at this holistically, it becomes clear that scraping ecommerce data is not about isolated datasets. It is about connecting multiple layers of information to understand how a market behaves in real time.
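To make the idea of connected layers concrete, here is a minimal sketch of how one scraped listing might combine product, pricing, seller, ranking, and review data in a single record. The class and field names (`ProductRecord`, `search_rank`, and so on) are illustrative assumptions, not a standard schema.

```python
from dataclasses import dataclass, field, asdict
from typing import List, Optional


@dataclass
class Review:
    rating: int   # e.g. 1 to 5 stars
    text: str


@dataclass
class ProductRecord:
    """One listing, combining several of the data types from the table above."""
    sku: str                            # product data
    name: str
    category: str
    price: float                        # pricing data
    currency: str
    seller: str                         # seller data
    search_rank: Optional[int] = None   # search and ranking data
    reviews: List[Review] = field(default_factory=list)  # customer data

    def average_rating(self):
        if not self.reviews:
            return None
        return sum(r.rating for r in self.reviews) / len(self.reviews)


# Hypothetical example values
record = ProductRecord(
    sku="A1", name="USB-C Cable", category="Accessories",
    price=9.99, currency="USD", seller="Example Store", search_rank=3,
    reviews=[Review(5, "Works well"), Review(4, "Good value")],
)
row = asdict(record)  # plain dict, ready to store as JSON or a database row
```

Keeping all layers on one record makes it straightforward to ask cross-cutting questions later, such as how price relates to search rank within a category.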

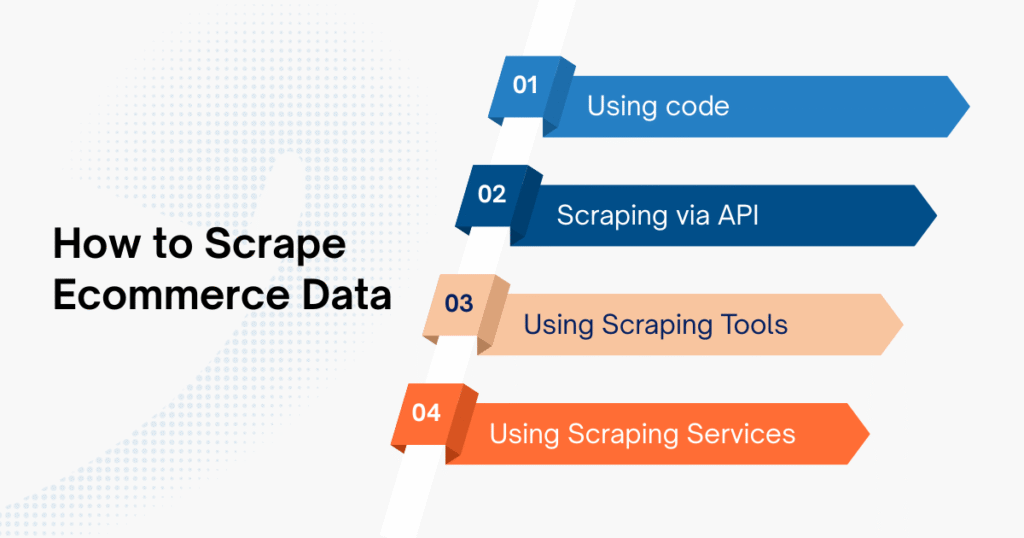

How to Scrape Ecommerce Data

There is no universal method that works for every team. The right approach depends on your technical capabilities, the scale you are targeting, and the criticality of the data to your operations. Choosing the wrong method early can lead to wasted time, unstable systems, or unnecessary costs.

Broadly speaking, there are four main ways to scrape ecommerce data, each with its own trade-offs.

| Method | Skill Level | Scalability | Best Use Case |
| --- | --- | --- | --- |
| Code | High | High | Custom, developer-driven systems |
| API | Medium | High | Structured and stable data sources |
| Tools | Low | Medium | Fast deployment, non-technical teams |
| Service | None | High | Business-focused, large-scale needs |

In practice, many teams start with code or tools, but as their data requirements grow, they often shift to more scalable, managed solutions. Speed and reliability tend to outweigh full control in most real-world scenarios.

Method 1 – Scrape Ecommerce Data with Code

Using code is the most traditional and flexible way to scrape ecommerce data. Developers typically rely on Python libraries such as BeautifulSoup to parse HTML, and on browser automation tools such as Selenium, Puppeteer, or Playwright to extract data from pages that render their content with JavaScript.

This approach gives you full control over how data is collected and structured. However, that control comes with responsibility. Ecommerce platforms frequently update their layouts, implement anti-bot protections, and load content dynamically. Maintaining a scraping system under these conditions requires continuous monitoring and technical expertise.

For a more hands-on walkthrough, you can learn how to scrape an ecommerce website step by step using Python-based approaches.

For teams with strong engineering resources, this method can be powerful. For others, it often becomes difficult to sustain over time.
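As a rough illustration of the code-based approach, the sketch below pulls product names and prices out of a small HTML snippet. To stay dependency-free it uses Python's standard-library `html.parser` as a stand-in for BeautifulSoup, and the markup (`class="product"`, `class="name"`, `class="price"`) is a made-up example; real sites use different structures and change them often.

```python
from html.parser import HTMLParser

# Hypothetical markup: real sites use different tags and class names.
SAMPLE_HTML = """
<div class="product"><span class="name">USB-C Cable</span><span class="price">$9.99</span></div>
<div class="product"><span class="name">Phone Case</span><span class="price">$14.50</span></div>
"""


class ProductParser(HTMLParser):
    """Collects name/price pairs from <span class="name"> and <span class="price">."""

    def __init__(self):
        super().__init__()
        self.products = []    # finished records
        self._field = None    # field currently being read, if any
        self._current = {}    # record under construction

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "span" and css_class in ("name", "price"):
            self._field = css_class

    def handle_data(self, data):
        if self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "div" and self._current:
            # Closing </div> ends the product card, so the record is complete.
            self.products.append(self._current)
            self._current = {}


def parse_products(html):
    parser = ProductParser()
    parser.feed(html)
    for product in parser.products:
        # Convert "$9.99" to a float; assumes a single leading "$" symbol.
        product["price"] = float(product["price"].lstrip("$"))
    return parser.products


products = parse_products(SAMPLE_HTML)
```

The parser is tied to specific tag and class names, which is exactly why layout changes on the target site tend to break scrapers like this one.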

Method 2 – Scrape Ecommerce Data via API

APIs offer a cleaner and more structured way to collect ecommerce data, especially when platforms provide official access. In some cases, teams also reverse-engineer the undocumented endpoints behind a site's front end to retrieve data more efficiently than by parsing HTML.

The main advantage here is stability. Data comes in a structured format, making it easier to process and integrate into existing systems. However, APIs often come with limitations, such as rate limits, restricted endpoints, or incomplete datasets.

This method works best when reliable API access is available and aligns with your data needs.

Method 3 – Use Ecommerce Scraping Tools

For many teams, the fastest way to scrape ecommerce data is to use off-the-shelf tools rather than build systems from scratch. These platforms allow users to extract data through visual interfaces or pre-built templates, without requiring programming skills.

Tools like Octoparse, ParseHub, WebHarvy, and Import.io are commonly used in this category. They allow users to point, click, and configure extraction rules without writing code.

The main advantage of this approach is speed. Teams can start collecting data within hours instead of weeks, which is especially useful when validating a use case or running short-term analysis. These tools often come with built-in scheduling, export options, and basic automation features.

However, as data needs grow, limitations begin to surface. Complex websites, large-scale scraping, or real-time requirements can push these tools beyond their intended use. In those cases, businesses often need to combine tools with APIs or transition to more robust solutions.

Method 4 – Use Ecommerce Data Scraping Services

As data needs grow, many businesses move toward managed services. Instead of maintaining infrastructure internally, they partner with providers such as Easy Data to handle the entire data pipeline.

This approach is particularly effective for companies operating in complex markets such as Southeast Asia, where platforms like Shopee and TikTok Shop require localized expertise. Services can deliver real-time datasets, custom scraping setups, and data access tailored to specific business use cases.

The key advantage here is focus. Instead of investing resources into building and maintaining scraping systems, teams can concentrate on using data to drive decisions.

Best Tools to Scrape Ecommerce Data in 2026

If you’re looking for a fast way to scrape ecommerce data without building infrastructure, off-the-shelf tools are often the starting point. These platforms are designed to simplify data extraction and reduce technical overhead, especially for teams without strong engineering resources. Some widely used tools include:

- Octoparse: A no-code tool with a visual interface, suitable for extracting product listings and pricing data quickly.

- ParseHub: Handles dynamic websites well and supports more complex workflows compared to basic tools.

- WebHarvy: Focuses on point-and-click extraction, making it easy to collect structured ecommerce data.

- Import.io: More enterprise-oriented, turning web data into structured datasets and APIs.

- Diffbot: Uses AI to automatically understand and extract structured data from web pages at scale.

- Browse AI: Allows users to train bots to monitor and extract data from websites without coding.

These tools lower the barrier to entry and are effective for small to mid-scale use cases, such as quick data collection, validation, or internal analysis.

If you want a deeper comparison of features, use cases, and selection criteria, check out a full guide to ecommerce scraping tools.

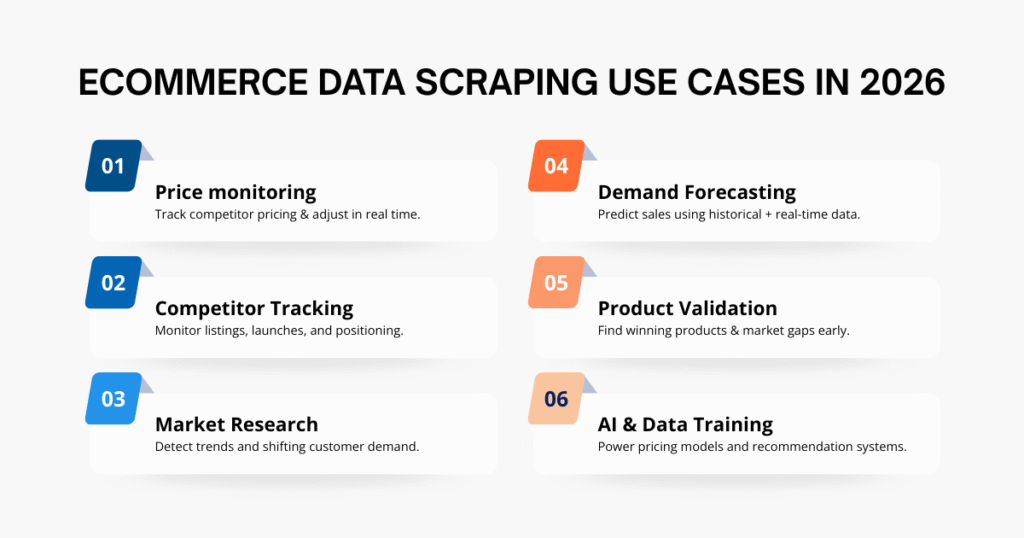

Ecommerce Data Scraping Use Cases

The true value of scraping only becomes clear when it is applied to real business problems. When companies begin to scrape ecommerce data consistently, they unlock a range of use cases that directly impact growth, pricing strategy, and market positioning.

- Price monitoring: Continuously track competitor pricing, discounts, and price fluctuations to adjust your own pricing strategy in real time and stay competitive.

- Competitor tracking: Monitor competitor product listings, launches, and performance signals to understand how they position themselves and where opportunities exist.

- Market research: Analyze large volumes of product, pricing, and search data to identify emerging trends and shifting consumer demand across marketplaces.

- Demand forecasting: Combine historical and real-time data to more accurately predict product demand, helping optimize inventory and marketing strategies.

- Product research & validation: Identify high-performing products, market gaps, and potential opportunities before launching or expanding product lines.

- AI & data training: Use structured datasets generated from scraping to train recommendation systems, pricing models, or other machine learning applications.

Businesses don’t just scrape ecommerce data to collect information; they do it to build systems that support faster, more informed decisions across the entire ecommerce lifecycle.
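As one example, the price monitoring use case above often reduces to comparing two snapshots of scraped prices. A minimal sketch follows; the SKUs, prices, and the 5% threshold are made-up values for illustration.

```python
def price_changes(previous, current, threshold_pct=5.0):
    """Return products whose price moved by at least threshold_pct percent."""
    changes = []
    for sku, new_price in current.items():
        old_price = previous.get(sku)
        if not old_price:
            continue  # new listing or missing/zero price: nothing to compare
        pct = (new_price - old_price) / old_price * 100
        if abs(pct) >= threshold_pct:
            changes.append((sku, old_price, new_price, round(pct, 1)))
    return changes


# Hypothetical snapshots keyed by SKU
morning = {"cable-01": 10.00, "case-02": 20.00, "stand-03": 30.00}
evening = {"cable-01": 8.00, "case-02": 20.50, "stand-03": 30.00, "hub-04": 45.00}

alerts = price_changes(morning, evening)
for sku, old, new, pct in alerts:
    print(f"{sku}: {old:.2f} -> {new:.2f} ({pct:+.1f}%)")
```

Here only `cable-01` is flagged, since its 20% drop exceeds the threshold, while the 2.5% move on `case-02` and the newly listed `hub-04` are ignored.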

Challenges When You Scrape Ecommerce Data

At a small scale, scraping may seem straightforward. But as soon as you try to scale, challenges begin to surface.

Websites implement anti-bot systems that detect unusual traffic patterns. IP addresses may be blocked, requiring proxy rotation. Many platforms use dynamic content loading, which complicates data extraction. On top of that, legal considerations vary depending on the platform and region.

These challenges are not just technical obstacles. They are the main reason why many scraping projects fail after initial success. Without the right infrastructure and strategy, maintaining consistent data flow becomes increasingly difficult.
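Proxy rotation and retry logic are usually the first pieces of infrastructure teams add. The sketch below shows the general pattern, assuming a caller-supplied `fetch(url, proxy)` function and treating HTTP 403 and 429 as signs of being blocked. Both are assumptions: sites signal blocks in different ways, and the proxy names are placeholders.

```python
import itertools
import random
import time


def fetch_with_rotation(url, fetch, proxies, max_attempts=4, sleep=time.sleep):
    """Try a request through successive proxies, backing off between attempts.

    fetch(url, proxy) is caller-supplied and returns (status_code, body).
    Treating 403 and 429 as "blocked" is an assumption.
    """
    pool = itertools.cycle(proxies)
    for attempt in range(max_attempts):
        proxy = next(pool)
        status, body = fetch(url, proxy)
        if status == 200:
            return body
        if status not in (403, 429):
            raise RuntimeError(f"unexpected status {status} for {url}")
        # Exponential backoff with jitter so retries do not arrive in lockstep.
        sleep(2 ** attempt + random.uniform(0, 1))
    raise RuntimeError(f"still blocked after {max_attempts} attempts: {url}")


# Stand-in for a real HTTP call: only the third proxy gets through.
def fake_site(url, proxy):
    return (200, "<html>ok</html>") if proxy == "proxy-c" else (403, "")


used_delays = []  # record the waits instead of actually sleeping
body = fetch_with_rotation(
    "https://example.com/item/1",
    fake_site,
    ["proxy-a", "proxy-b", "proxy-c"],
    sleep=used_delays.append,
)
```

Capping the number of attempts matters as much as the rotation itself, since unbounded retries against a site that is blocking you only make the traffic pattern more conspicuous.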

How to Scrape Ecommerce Data at Scale

Scaling is where most teams encounter real complexity. Collecting data from a few pages is manageable, but scraping thousands or millions of listings requires a completely different approach.

At scale, scraping becomes an infrastructure problem. It involves automated pipelines, distributed systems, proxy management, and continuous data cleaning. Each component must work reliably to ensure data accuracy and consistency.

This is why many organizations eventually move away from purely internal solutions. The cost of maintaining large-scale scraping systems often outweighs the benefits, especially when specialized providers can deliver the same results more efficiently.
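The continuous data cleaning step is often the least visible part of this infrastructure. A minimal sketch of that stage is shown below: it normalizes price strings and keeps only the most recent record per product. The field names (`sku`, `scraped_at`) and the assumption that `.` is the decimal separator are illustrative, and real pipelines need locale-aware parsing.

```python
import re


def normalize_price(raw):
    """Turn strings like '$1,299.00' or '1299' into a float; None if unparseable.

    Assumes '.' is the decimal separator and ',' a thousands separator.
    """
    cleaned = re.sub(r"[^\d.]", "", raw)
    try:
        return float(cleaned)
    except ValueError:
        return None


def clean_records(records):
    """Drop records without a usable price and keep the latest record per SKU."""
    latest = {}
    for rec in records:
        price = normalize_price(rec["price"])
        if price is None:
            continue
        key = rec["sku"]
        # ISO 8601 timestamps in the same format compare correctly as strings.
        if key not in latest or rec["scraped_at"] > latest[key]["scraped_at"]:
            latest[key] = {**rec, "price": price, "name": rec["name"].strip()}
    return list(latest.values())


# Hypothetical raw scrape output with a duplicate and an unusable price
raw = [
    {"sku": "A1", "name": " USB-C Cable ", "price": "$9.99", "scraped_at": "2026-01-05T08:00"},
    {"sku": "A1", "name": "USB-C Cable", "price": "$8.49", "scraped_at": "2026-01-05T20:00"},
    {"sku": "B2", "name": "Phone Case", "price": "N/A", "scraped_at": "2026-01-05T08:00"},
    {"sku": "C3", "name": "Laptop Stand", "price": "1,299.00", "scraped_at": "2026-01-05T08:00"},
]
cleaned = clean_records(raw)
```

At real scale the same logic would typically run inside a scheduled job or stream processor rather than over an in-memory list, but the cleaning rules themselves stay the same.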

Ecommerce Data Scraping Service

At some point, the question shifts from “How do we scrape ecommerce data?” to “Should we build or outsource this?” There is no universal answer, but the decision usually depends on how critical data is to your business and how quickly you need it. For companies operating across marketplaces like Shopee, TikTok Shop, or Lazada, having reliable and real-time data is often non-negotiable.

Working with providers like Easy Data allows teams to access customized datasets and scalable infrastructure without investing months into development. More importantly, it enables them to focus on what actually matters: turning data into actionable insights.

If your data requirements are already slowing down decisions or creating blind spots, you have likely outgrown basic tools. It may be time to move toward a dedicated ecommerce data scraping service, especially in fast-moving markets like Southeast Asia.

Conclusion

To scrape ecommerce data in 2026 is not just about extracting information from websites. It is about building a system that allows your business to see the market clearly and respond faster than competitors. The companies that succeed are not necessarily those with the most data, but those that can collect it efficiently, process it effectively, and translate it into decisions.

Whether you choose to build your own solution, use tools, or partner with a service provider, the objective remains the same: transform raw ecommerce data into a sustainable competitive advantage.
