In fast-moving ecommerce markets, data accuracy determines pricing power, campaign timing, and expansion strategy. Yet many teams underestimate how fragile scraping systems can be. Hiring the right web scraping expert is often the difference between scalable competitive intelligence and months of unstable, unreliable data.

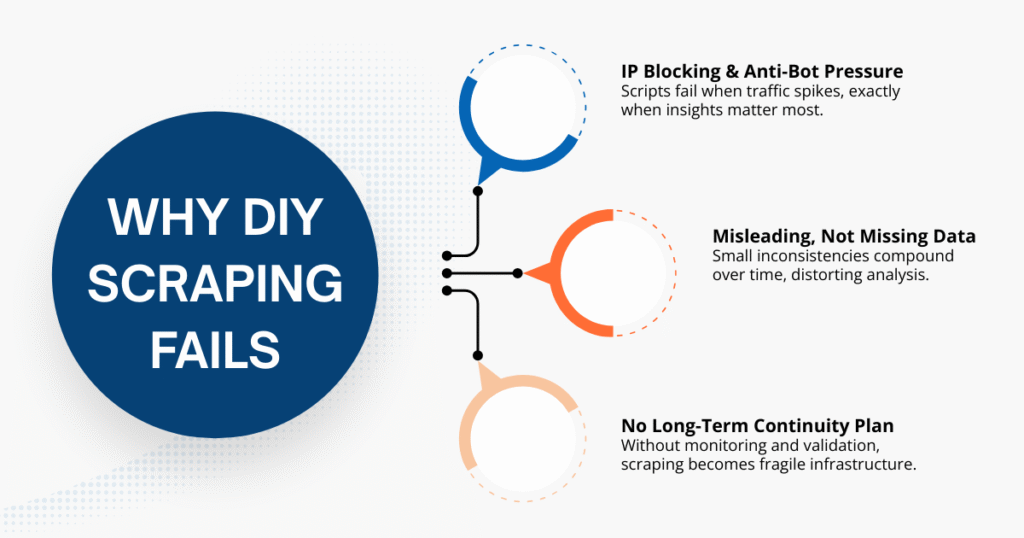

Why Most E-commerce Businesses Fail with DIY Scraping

Many e-commerce teams start with a simple script and high expectations. In the first few weeks, everything runs smoothly: data flows in, reports look clean, and dashboards update as expected. The real problems rarely appear early. They surface later, when SKU volume increases, during campaign season, or after a marketplace layout update.

IP Blocking and Anti-Bot Detection

Marketplace platforms constantly strengthen anti-bot systems. Without proper proxy rotation, browser fingerprint management, and realistic request behavior, scraping scripts eventually get flagged.

What makes this especially dangerous is timing. Failures often occur during high-traffic campaign periods (exactly when pricing data matters most).

Incomplete or Poorly Formatted Data

One of the most common issues is not missing data, but misleading data.

During campaigns, price formats may shift; SKU identifiers can change; sellers merge, duplicate or alter display names. At first glance, everything looks complete. But small inconsistencies compound over time.

Without strong normalization logic, datasets gradually lose reliability, even though the scraper technically “works”.
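As a minimal sketch of what normalization logic can look like, the function below converts scraped price strings into a single numeric form. The name `normalize_price` and the separator heuristic are assumptions made for illustration: a final separator followed by one or two digits is treated as the decimal mark, and every other separator is treated as a thousands mark. Real marketplaces may need per-locale rules instead.

```python
import re
from decimal import Decimal


def normalize_price(raw: str) -> Decimal:
    """Convert a scraped price string such as 'RM 1,299.50' to a Decimal.

    Heuristic (an assumption, not a universal rule): a final separator
    followed by 1-2 digits is the decimal mark; all other separators
    are thousands marks.
    """
    # Keep digits and separators, then drop stray edge separators ("Rs. 50").
    digits = re.sub(r"[^\d.,]", "", raw).strip(".,")
    if not digits:
        raise ValueError(f"no numeric price in {raw!r}")

    match = re.search(r"[.,](\d{1,2})$", digits)
    if match:
        whole = re.sub(r"[.,]", "", digits[: match.start()])
        return Decimal(f"{whole}.{match.group(1)}")
    return Decimal(re.sub(r"[.,]", "", digits))
```

Raising an error on unparseable input, rather than returning zero, keeps a format change from silently entering the dataset as a valid-looking price.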

No Long-Term Maintenance Plan

A scraping script can work perfectly until a layout update or campaign change silently breaks key fields. Most DIY setups lack monitoring, version control, or validation checks, so issues aren’t obvious at first. The data still flows, but its reliability slowly erodes.
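A validation check does not need to be elaborate to catch a silently broken field. The sketch below assumes each scraped record is a dictionary with `sku`, `title`, `price`, and `seller` keys; those field names and the `validate_record` helper are hypothetical and would be replaced by whatever your pipeline actually extracts.

```python
# Hypothetical field names; adjust to the fields your scraper extracts.
REQUIRED_FIELDS = ("sku", "title", "price", "seller")


def validate_record(record: dict) -> list[str]:
    """Return a list of problems found in one scraped record (empty if clean)."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        # Treat missing keys, None, and blank strings as the same failure.
        if value is None or (isinstance(value, str) and not value.strip()):
            problems.append(f"missing {field}")

    price = record.get("price")
    if isinstance(price, (int, float)) and price <= 0:
        problems.append("non-positive price")
    return problems
```

Running a check like this on every batch, and logging the results, turns a layout change from a slow erosion into a visible event.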

An experienced web scraping expert focuses not just on extraction, but on continuity (ensuring the system stays accurate as platforms evolve). Because once business decisions rely on scraped data, scraping stops being a side project and becomes part of your operational backbone.

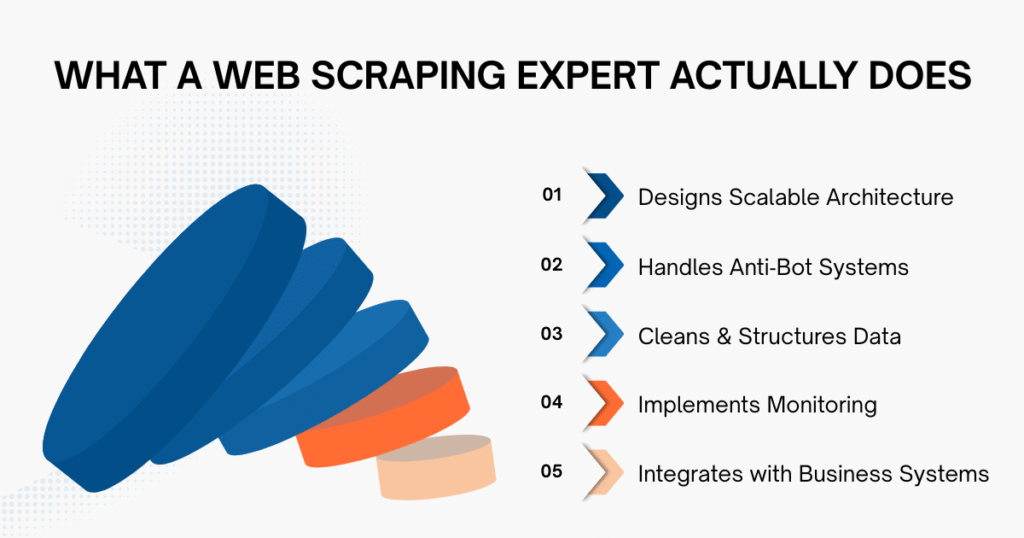

What Does a Web Scraping Expert Actually Do?

If you ask five different developers what scraping involves, you may get five different answers. In ecommerce environments, however, the role goes far beyond writing extraction scripts. A capable web scraping expert designs systems that withstand volatility rather than reacting to it.

Design Scraping Architecture

Before writing a single line of code, architecture decisions must be made. Which fields truly matter for decision-making? How should SKUs be mapped across marketplaces? How frequently should data be refreshed without triggering detection?

Strong architecture prevents downstream chaos. Weak architecture guarantees rework.
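One way to make these decisions concrete is to fix a canonical record and a SKU mapping before any extraction code exists. The sketch below is illustrative only: the `Listing` fields, the `SKU_MAP` entries, and the `to_listing` helper are assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal
from typing import Optional

# Hypothetical mapping from (marketplace, marketplace SKU) to one internal SKU.
SKU_MAP = {
    ("shopee", "SP-123"): "INT-001",
    ("lazada", "LZ-987"): "INT-001",
}


@dataclass(frozen=True)
class Listing:
    marketplace: str
    marketplace_sku: str
    internal_sku: Optional[str]  # None when the SKU has not been mapped yet
    price: Decimal
    currency: str
    scraped_at: datetime


def to_listing(marketplace: str, marketplace_sku: str, price: str, currency: str) -> Listing:
    """Build a canonical record and attach the internal SKU when one is known."""
    return Listing(
        marketplace=marketplace,
        marketplace_sku=marketplace_sku,
        internal_sku=SKU_MAP.get((marketplace, marketplace_sku)),
        price=Decimal(price),
        currency=currency,
        scraped_at=datetime.now(timezone.utc),
    )
```

Keeping unmapped SKUs as `None` instead of dropping them makes gaps in the mapping measurable.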

Handle Anti-Bot Systems

An experienced web scraping expert understands:

- IP rotation frameworks

- CAPTCHA mitigation strategies

- Rate-limit avoidance

- Headless browser detection bypass techniques

Without this depth, scraping may function at small scale but fail when volume increases.
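Of the items above, rate-limit handling is the easiest to illustrate. A common approach is to slow down when a platform signals throttling, using exponential backoff with random jitter. The sketch below only computes wait times; the function name `backoff_delays` and the default values are assumptions, and how the delays are applied depends on the HTTP client in use.

```python
import random
from typing import Optional


def backoff_delays(
    attempts: int,
    base: float = 1.0,
    cap: float = 60.0,
    rng: Optional[random.Random] = None,
) -> list[float]:
    """Return one randomized wait time (seconds) per retry attempt.

    Uses "full jitter": each delay is drawn uniformly between 0 and
    min(cap, base * 2**attempt), so retries spread out instead of
    arriving in synchronized bursts.
    """
    if rng is None:
        rng = random.Random()
    delays = []
    for attempt in range(attempts):
        ceiling = min(cap, base * 2 ** attempt)
        delays.append(rng.uniform(0, ceiling))
    return delays
```

Passing a seeded `random.Random` makes the schedule reproducible in tests.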

Data Cleaning & Structuring

Extraction is only the beginning. In real-world web scraping for ecommerce, value emerges during cleaning and structuring.

- Price formats must be standardized

- Duplicate listings removed

- Campaign discounts separated from base prices

- Seller identities reconciled across pages

Large datasets are meaningless without structured consistency and strong data governance practices. The true strength of a web scraping expert lies in transforming raw HTML into reliable analytical input.
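Two of the cleaning steps above, removing duplicates and reconciling seller names, can be sketched in a few lines. The example assumes each row is a dictionary with `seller`, `sku`, and an ISO 8601 `scraped_at` string; those keys and both helper names are hypothetical.

```python
import unicodedata


def seller_key(display_name: str) -> str:
    """Reduce a seller display name to a comparison key.

    Normalizes Unicode width variants, ignores case, and collapses
    repeated whitespace so cosmetic differences do not create new sellers.
    """
    text = unicodedata.normalize("NFKC", display_name).casefold()
    return " ".join(text.split())


def dedupe(listings: list[dict]) -> list[dict]:
    """Keep only the most recently scraped row per (seller, SKU) pair."""
    latest: dict[tuple[str, str], dict] = {}
    for row in listings:
        key = (seller_key(row["seller"]), row["sku"])
        # ISO 8601 timestamps in one timezone sort correctly as strings.
        if key not in latest or row["scraped_at"] > latest[key]["scraped_at"]:
            latest[key] = row
    return list(latest.values())
```

Name matching of this kind is only a first pass. Where a marketplace exposes a stable seller ID, that ID is a safer key than any display name.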

Ongoing Monitoring

Markets change constantly. Layouts evolve. Campaign tags appear and disappear.

Rather than relying on manual checks, a mature scraping system includes alert mechanisms, error logging, and historical comparison logic. Without monitoring, silent corruption can distort pricing benchmarks for weeks before anyone notices.
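Historical comparison can start as a simple batch-over-batch check. The sketch below compares the current batch with the previous one on two signals: how often a field is filled, and how far its median has moved. The function names and the thresholds (`max_fill_drop`, `max_median_shift`) are assumptions that would need tuning per category.

```python
from statistics import median


def fill_rate(batch: list[dict], field: str) -> float:
    """Share of rows in which the field is present and non-empty."""
    if not batch:
        return 0.0
    filled = sum(1 for row in batch if row.get(field) not in (None, ""))
    return filled / len(batch)


def batch_alerts(
    previous: list[dict],
    current: list[dict],
    field: str = "price",
    max_fill_drop: float = 0.1,
    max_median_shift: float = 0.5,
) -> list[str]:
    """Return human-readable alerts when the current batch looks suspicious."""
    alerts = []
    drop = fill_rate(previous, field) - fill_rate(current, field)
    if drop > max_fill_drop:
        alerts.append(f"{field} fill rate dropped by {drop:.0%}")

    prev_values = [r[field] for r in previous if r.get(field) not in (None, "")]
    cur_values = [r[field] for r in current if r.get(field) not in (None, "")]
    if prev_values and cur_values:
        prev_med, cur_med = median(prev_values), median(cur_values)
        if prev_med and abs(cur_med - prev_med) / prev_med > max_median_shift:
            alerts.append(f"{field} median moved from {prev_med} to {cur_med}")
    return alerts
```

A sudden fill-rate drop usually points to a broken selector, while a large median shift more often indicates a parsing change such as a moved decimal separator.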

Integration with Internal Systems

Scraped data should not live in spreadsheets. It needs to flow directly into BI dashboards, pricing engines, and inventory planning tools.

If teams must manually clean or reconcile extracted data, the operational efficiency promised by scraping quickly disappears. A strong web scraping expert builds systems that connect seamlessly with how your business already operates.
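As a small illustration of data flowing into a queryable store rather than a spreadsheet, the sketch below loads cleaned rows into SQLite using Python's standard `sqlite3` module. The `prices` table layout and the `load_prices` helper are assumptions; a production setup would more likely target the warehouse behind your BI tools.

```python
import sqlite3


def load_prices(conn: sqlite3.Connection, rows: list[dict]) -> None:
    """Insert cleaned price rows; re-running the same batch is harmless."""
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS prices (
            marketplace TEXT NOT NULL,
            sku         TEXT NOT NULL,
            scraped_at  TEXT NOT NULL,
            price       REAL NOT NULL,
            PRIMARY KEY (marketplace, sku, scraped_at)
        )
        """
    )
    # The primary key plus INSERT OR REPLACE makes the load idempotent.
    conn.executemany(
        "INSERT OR REPLACE INTO prices (marketplace, sku, scraped_at, price) "
        "VALUES (:marketplace, :sku, :scraped_at, :price)",
        rows,
    )
    conn.commit()
```

Making the load idempotent means a failed job can simply be rerun without a manual cleanup step.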

In practice, this role is closer to data infrastructure engineering than simple script development.

Freelancer vs Agency vs In-House Developer

Choosing the right model is as important as choosing the right individual. Below is a practical comparison framework:

| Criteria | Freelancer | Agency | In-House Developer |
| --- | --- | --- | --- |
| Cost | Low–Medium | Medium–High | High (salary + overhead) |
| Scalability | Limited | High | Medium |
| Maintenance | Often reactive | Structured | Depends on the team |
| Risk Level | Medium–High | Lower (if reputable) | Medium |
| Long-Term Reliability | Variable | High (with SLA) | High (if retained) |

Looking at the comparison, the biggest difference isn’t price; it’s risk distribution.

- Freelancers are often suitable for defined, short-term projects.

- Agencies typically bring structured monitoring, backup resources, and scalability (particularly valuable when scraping spans multiple marketplaces).

- In-house developers provide control, but they also require long-term retention and documentation discipline to avoid knowledge silos.

The right decision depends on how central scraped data is to your business. If pricing adjustments, category expansion, or competitive monitoring depend on this data weekly, reliability should carry more weight than short-term savings.

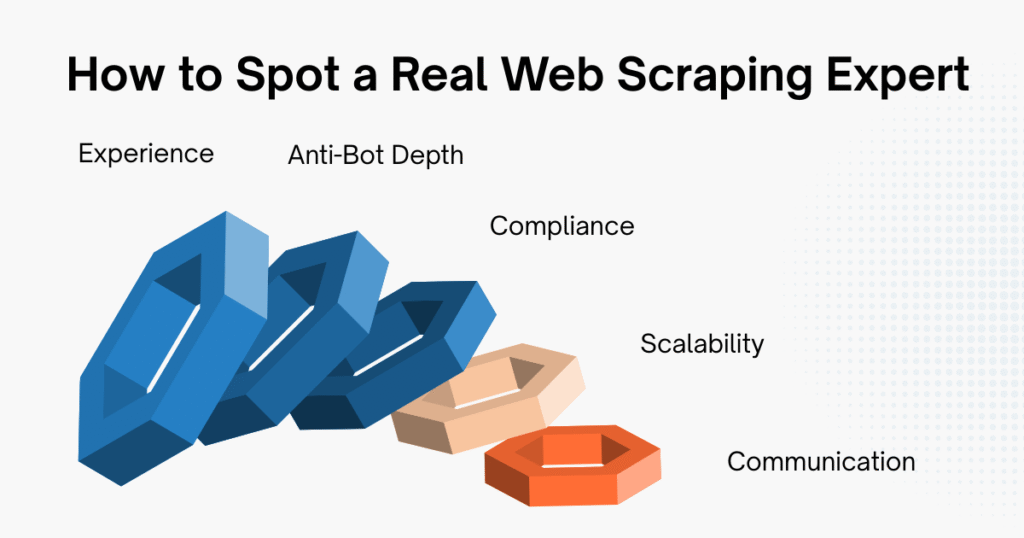

5 Tips to Find the Best Web Scraping Expert

Now that expectations are clearer, here are five criteria that separate a capable web scraping expert from a generic developer.

1. Proven Experience in E-commerce Platforms

Marketplace scraping behaves very differently from scraping static corporate websites: campaign cycles distort pricing, rankings fluctuate daily, and seller inventories change rapidly.

Rather than simply asking whether someone has scraped before, explore where they’ve worked. Have they handled Amazon, Shopee, Lazada, or TikTok Shop environments? Do they understand flash sale mechanics and seller duplication issues?

Hands-on ecommerce exposure often matters more than theoretical scraping knowledge.

2. Technical Depth (Anti-Detection Strategy)

Ask specific questions:

- How do you handle IP rotation?

- What happens if CAPTCHA frequency increases?

- How do you detect silent parsing errors?

A confident web scraping expert explains methodology, not vague promises.

3. Legal Awareness (Especially in SEA Markets)

Southeast Asian marketplaces have unique compliance sensitivities. A web scraping expert should understand:

- Terms of service boundaries

- Ethical scraping practices

- Data usage restrictions

Ignoring compliance can create legal and reputational risks.

4. Ability to Scale

Scaling from 100 SKUs to 50,000 is not simply increasing frequency. It alters scheduling logic, storage design, monitoring requirements, and validation complexity.

Ask how historical data is stored, how layout changes are tracked, and how performance is maintained under higher volume. A professional web scraping expert thinks about scale early. Hobbyists think about it only after problems appear.
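A quick calculation shows why scale changes the design. The sketch below estimates the sustained request rate needed to refresh a catalog. The inputs used in the example (three marketplaces, four refreshes per day, one request per SKU) are illustrative assumptions, not benchmarks.

```python
SECONDS_PER_DAY = 86_400


def requests_per_day(sku_count: int, marketplaces: int, refreshes_per_day: int) -> int:
    """Total page requests per day, assuming one request per SKU per marketplace."""
    return sku_count * marketplaces * refreshes_per_day


def required_rate(sku_count: int, marketplaces: int, refreshes_per_day: int) -> float:
    """Average requests per second needed to keep that schedule."""
    return requests_per_day(sku_count, marketplaces, refreshes_per_day) / SECONDS_PER_DAY


small = required_rate(100, 1, 4)
large = required_rate(50_000, 3, 4)
print(f"100 SKUs: {small:.4f} req/s, 50,000 SKUs: {large:.2f} req/s")
```

Under these assumptions, 100 SKUs on one marketplace need about 400 requests a day, which a single script handles easily. At 50,000 SKUs across three marketplaces the total is 600,000 requests a day, or roughly 7 per second around the clock, which is the point where scheduling, storage, and monitoring have to be designed rather than improvised.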

5. Clear Communication & Documentation

Scraping systems evolve. Marketplaces update. Business priorities shift. Without documentation, knowledge becomes dependent on individuals. Maintenance becomes fragile. Risk increases quietly over time.

A strong web scraping expert communicates technical decisions in plain language and documents architecture, normalization logic, and monitoring processes clearly. Transparency reduces dependency and strengthens long-term reliability.

Red Flags to Avoid

Certain warning signs repeat across failed projects.

Claims of “guaranteed unblockable scraping” are unrealistic; no system is immune to platform defenses. Similarly, if compliance is never discussed or if monitoring and validation processes are absent from the proposal, the engagement is likely focused only on extraction.

Extraction alone is rarely enough for ecommerce decision-making.

Why Custom Scraping Matters More Than Generic Tools

Generic scraping tools are often sufficient for:

- Small-scale experiments

- Educational demos

- Limited SKU tracking

But in real ecommerce operations, volatility changes the equation.

As campaign cycles intensify and seller dynamics shift, many ecommerce teams realize that maintaining internal scripts becomes more complex than expected. That’s often the point where businesses move from isolated tools to structured ecommerce data scraping services built for stability rather than short-term extraction.

Across Southeast Asia, Easy Data has seen this pattern repeatedly (especially on fast-moving platforms). That experience shaped how Easy Data designs dedicated Shopee data scraping services and multi-marketplace data frameworks focused on normalization discipline, detection resilience, and continuous monitoring.

The objective is straightforward: help SEA partners maintain consistent, decision-ready data as marketplaces evolve.

Final Thoughts

Hiring a web scraping expert is not about outsourcing code. It is about securing a reliable data foundation that supports pricing decisions, category expansion, and competitive monitoring.

The right expert designs for volatility.

The wrong one designs for today.

If scraping data influences real revenue decisions in your organization, choose expertise that treats data as infrastructure, not as a side project.
