In contemporary e-commerce settings, decisions are increasingly influenced by external market signals. Much of this information is publicly available on websites, but transforming it into structured insights requires more than simple data collection. Understanding web scraping data (how it is extracted, processed, and transformed into usable datasets) is essential for businesses that rely on competitive intelligence.

What Is Web Scraping Data?

Web scraping data refers to structured information extracted from publicly accessible web pages through automated tools or scripts. Instead of manually copying information from hundreds or thousands of pages, scraping systems programmatically collect the data and convert it into machine-readable formats.

The concept itself is simple, but its business value lies in scale. A single product page might contain dozens of useful fields: product title, price, seller information, ratings, reviews, and stock availability. Multiply that across tens of thousands of listings on marketplaces like Shopee, Lazada, TikTok Shop, or Amazon, and the resulting dataset becomes a powerful source of market intelligence.

In practice, web scraping data typically includes information such as:

- Product names and descriptions

- Current prices and promotional discounts

- Seller ratings and store metadata

- Customer review counts and sentiment indicators

- Inventory status or stock availability

- Category rankings or search result positions

For e-commerce teams, this type of data enables continuous monitoring of competitor strategies and market dynamics. Instead of relying on occasional manual checks, companies can build automated datasets that update daily or even hourly.

This shift (from sporadic observation to systematic data collection) is what makes web scraping data increasingly valuable in modern web scraping for ecommerce strategies.

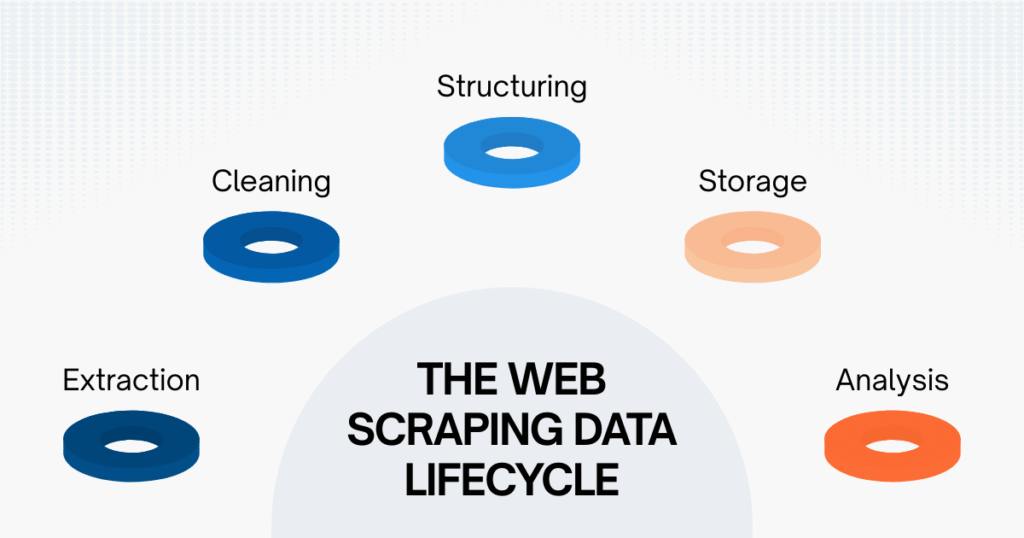

The Web Scraping Data Lifecycle

Collecting data from websites is only the first step. In real-world systems, web scraping data moves through several stages before it becomes useful for analysis or business decisions.

Understanding this lifecycle helps explain why reliable scraping projects require more than just extraction scripts but also structured data pipelines that manage processing and validation.

Extraction

Extraction is the process most people associate with web scraping. At this stage, automated tools access web pages and retrieve the relevant HTML elements containing the target information. For example, an ecommerce scraper might collect:

- Product title

- Product price

- Review count

- Seller name

However, raw HTML rarely comes in a perfectly structured format. Each marketplace may display data differently, and page layouts often change during promotional campaigns. As a result, extraction is only the beginning of the web scraping data pipeline.

Cleaning

Once data is extracted, it usually contains inconsistencies that must be corrected before analysis. A simple price field illustrates the issue. On different pages, the same price might appear as:

- $12.50

- 12.50 USD

- $12.50 – $14.00

- Discounted: $10.99

Without cleaning rules, these variations would make automated analysis unreliable. Cleaning involves removing irrelevant text, standardizing formats, and validating fields so that the dataset remains internally consistent.

Structuring

After cleaning, the data needs to be organized into a clear schema. This means defining standardized fields such as:

- product_id

- product_name

- marketplace

- seller_name

- price

- rating

- review_count

Structuring is the step that transforms web scraping data from scattered text fragments into a dataset that analytics tools can process. When done properly, structured datasets allow analysts to compare information across marketplaces, categories, or time periods.

Storage

Reliable scraping systems also need long-term storage strategies. Data may be stored in:

- Relational databases

- Data warehouses

- Cloud storage platforms

- Analytics pipelines

Storage decisions matter because historical datasets often become more valuable than real-time snapshots. Tracking price changes, seller behavior, or product rankings over time allows businesses to detect trends that would otherwise remain invisible.

Analysis

The final stage of the lifecycle is where web scraping data begins to deliver real business value.

Once data is structured and stored, it can be integrated into dashboards, pricing engines, or forecasting models. Analysts can identify pricing gaps, monitor competitors, and evaluate marketplace dynamics with far greater accuracy than manual observation allows.

In other words, the true purpose of scraping is not data collection itself; it is decision support.

How Web Scraping Data Differs from API Data

Many modern platforms provide official APIs (designed to expose structured endpoints for developers), which raises an important question: why collect web scraping data if APIs already exist? The answer lies in coverage and control.

| Aspect | Web Scraping Data | API Data |

| Access | Public webpage content | Platform-controlled access |

| Data scope | Often broader | Limited to API endpoints |

| Flexibility | Highly customizable | Fixed structure |

| Rate limits | Managed by scraper architecture | Strict platform limits |

| Availability | Works even without API access | Only if the API exists |

APIs are extremely useful when available. They provide stable structures and predictable responses. However, many marketplaces restrict their APIs or expose only partial datasets. Critical information such as seller listings, search rankings, or full product catalogs may not be available through official endpoints.

Scraping fills that gap by capturing what users actually see on the website. For market monitoring and competitor analysis, that perspective is often more practical than relying solely on API access.

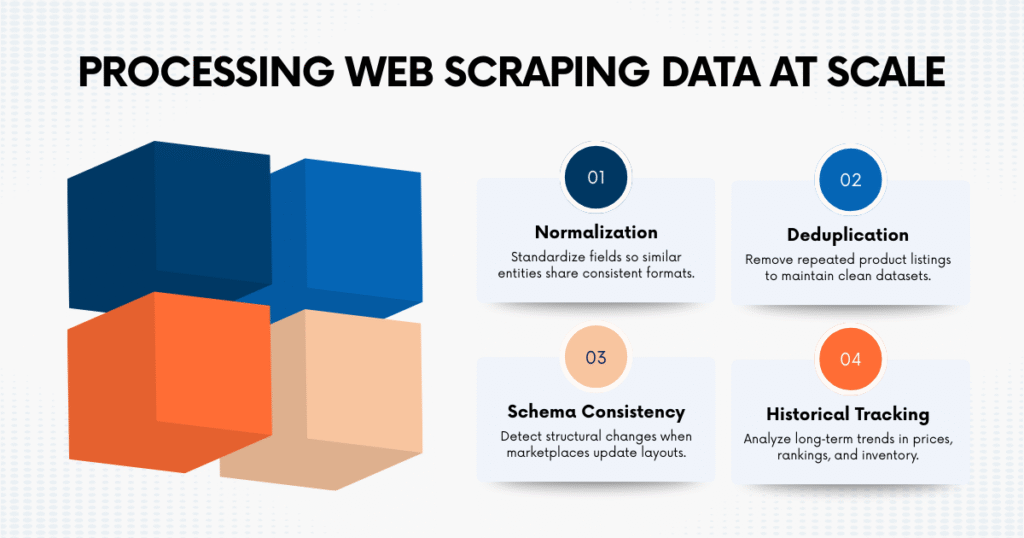

Processing Web Scraping Data at Scale

Small scraping projects can sometimes operate with minimal processing. But once datasets grow to thousands or millions of records, maintaining data quality becomes a major technical challenge. Organizations that rely heavily on web scraping data typically implement additional processing layers to maintain reliability.

Normalization

Normalization ensures that similar data fields follow consistent formats across sources. For example, seller names may appear slightly differently across listings:

- “Tech Store Official”

- “TechStore Official Shop”

- “TECH STORE”

Normalization rules help consolidate these variations so the system recognizes them as the same entity.

Deduplication

Large marketplaces often contain duplicate product listings or mirrored catalog entries. Without deduplication logic, scraping systems may record the same product multiple times, inflating dataset size and distorting analytical results.

Deduplication methods usually rely on combinations of product IDs, URLs, or similarity algorithms to identify repeated records.

Schema Consistency

When websites update their layouts, the structure of extracted fields can change unexpectedly. For instance, a product page might move the price element into a new HTML container during a promotional campaign.

Maintaining schema consistency means continuously validating that fields still match the expected structure. Mature scraping pipelines include monitoring systems that detect these changes early.

Historical Tracking

One of the biggest advantages of web scraping data is the ability to build historical datasets. Tracking how prices, rankings, or inventory levels change over time allows analysts to detect patterns such as:

- seasonal pricing cycles

- competitor discount strategies

- emerging product categories

Historical tracking transforms raw scraping outputs into strategic intelligence.

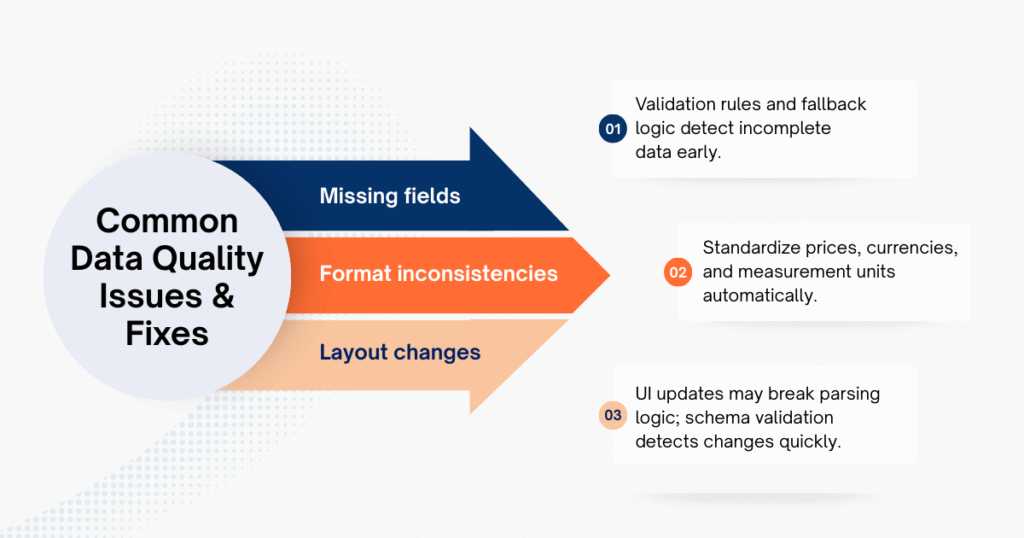

Common Data Quality Issues & Fixes

Even well-designed scraping systems encounter data quality challenges. The most common problems tend to fall into a few predictable categories.

- Missing fields: When pages lack certain attributes or parsing rules fail. Validation rules and fallback logic help detect gaps early.

- Format inconsistencies: Particularly common with prices, currencies, or measurement units. Automated standardization keeps datasets comparable.

- Layout changes: Marketplace UI updates may break parsing logic. Continuous monitoring and schema validation reduce this risk.

Experienced web scraping experts address these risks through automated alerts, schema validation, and continuous quality checks. Over time, these safeguards become just as important as the extraction process itself.

Real Business Applications in E-commerce

When managed properly, web scraping data becomes a foundation for multiple ecommerce strategies.

- Competitive price monitoring: Retailers track competitor listings and adjust pricing dynamically.

- Marketplace assortment analysis: Scraping product catalogs reveals gaps in inventory or emerging product niches.

- Customer feedback analysis: Review datasets highlight recurring product issues and customer sentiment trends.

- Category trend detection: Tracking new listings over time helps identify growing product segments earlier.

Companies that operate across Southeast Asian marketplaces often use scraping datasets to maintain a unified view of their competitive landscape. For organizations that rely heavily on these insights, building a reliable ecommerce data scraping pipeline becomes a long-term investment rather than a temporary technical experiment.

Many teams choose to work with specialized providers such as Easy Data, especially when monitoring multiple platforms simultaneously. Structured datasets from providers’ Lazada, TikTok Shop, and Shopee data scraping services enable businesses to track pricing changes, seller behavior, and category movements across marketplaces without maintaining complex scraping infrastructure internally.

Final Thoughts

Access to reliable external data is becoming a competitive requirement in modern e-commerce. Web scraping data provides a practical way to transform publicly available website information into structured datasets that support pricing strategies, market research, and operational planning.

However, successful scraping initiatives rarely depend on extraction alone. The real challenge lies in maintaining data quality, processing large datasets, and ensuring long-term reliability as websites evolve. When organizations treat scraping as part of their broader data infrastructure, the resulting datasets become far more valuable.

Leave a Reply