Introduction

Demand for freelance web scraping services has been rising steadily as businesses rely more on data-driven decisions, which makes it a potentially lucrative field for technically skilled freelancers. Whether you want to become a freelancer or hire one, this guide covers what you need to know, from essential tools to legal considerations.

What is Web Scraping?

Web scraping is the process of extracting data from websites using automated scripts or software. This data can be used for various purposes, such as price monitoring, competitor analysis, market research, and lead generation.
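To make the idea concrete, here is a minimal sketch that pulls product names and prices out of a page using only Python's standard library. The HTML snippet, the `product`, `name`, and `price` class names, and the `extract_products` helper are all assumptions made for illustration; a real site will have its own markup, and the HTML would normally come from an HTTP request rather than a string.

```python
from html.parser import HTMLParser

# Hypothetical markup standing in for a fetched product page.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span><span class="price">19.99</span></li>
  <li class="product"><span class="name">Gadget</span><span class="price">5.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text of <span class="name"> and <span class="price"> elements."""

    def __init__(self):
        super().__init__()
        self.products = []   # one dict per <li class="product">
        self._field = None   # field held by the <span> currently open, if any

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "li" and css_class == "product":
            self.products.append({})
        elif tag == "span" and css_class in ("name", "price"):
            self._field = css_class

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None

    def handle_data(self, data):
        # Text outside a tracked <span> (e.g. whitespace) is ignored.
        if self._field and self.products:
            self.products[-1][self._field] = data.strip()


def extract_products(html):
    """Return a list of {"name": str, "price": float} dicts found in the HTML."""
    parser = ProductParser()
    parser.feed(html)
    return [{"name": p["name"], "price": float(p["price"])} for p in parser.products]


if __name__ == "__main__":
    for product in extract_products(SAMPLE_HTML):
        print(product["name"], product["price"])
```

In client work, a library such as BeautifulSoup or Scrapy usually replaces the hand-written parser, but the steps stay the same: fetch the page, locate the elements, and convert their text into structured records.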

Common Use Cases of Web Scraping

- E-commerce: Tracking competitor prices and analyzing market trends.

- SEO & Digital Marketing: Gathering data for keyword research and content strategies.

- Real Estate & Job Market: Aggregating listings for real estate platforms or job boards.

- Academic & Business Research: Collecting large datasets for analysis.

Why Choose Freelancing in Web Scraping?

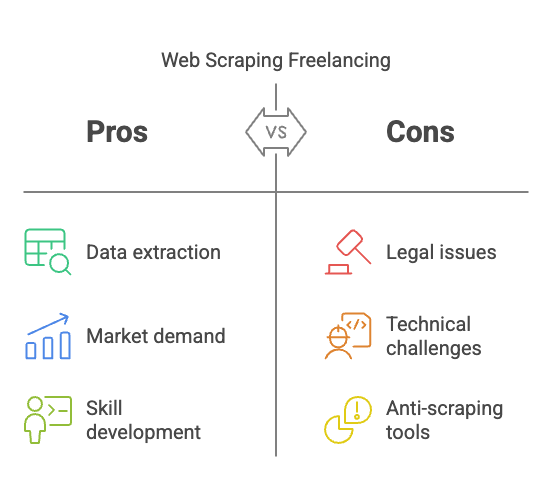

Benefits of Becoming a Web Scraping Freelancer

- High Demand: Companies need data insights, making scraping a valuable skill.

- Flexible Work Hours: You can work remotely and set your own schedule.

- Lucrative Earnings: Experienced web scrapers can charge premium rates.

- Diverse Projects: Work with different industries, from finance to e-commerce.

Challenges of Web Scraping Freelancing

- Legal & Ethical Concerns: Some websites prohibit scraping in their terms of service, so each project needs a compliance check before work starts.

- Technical Complexity: Scraping dynamic or JavaScript-heavy sites can be challenging.

- Anti-Scraping Mechanisms: Websites employ measures like CAPTCHA to prevent bots.

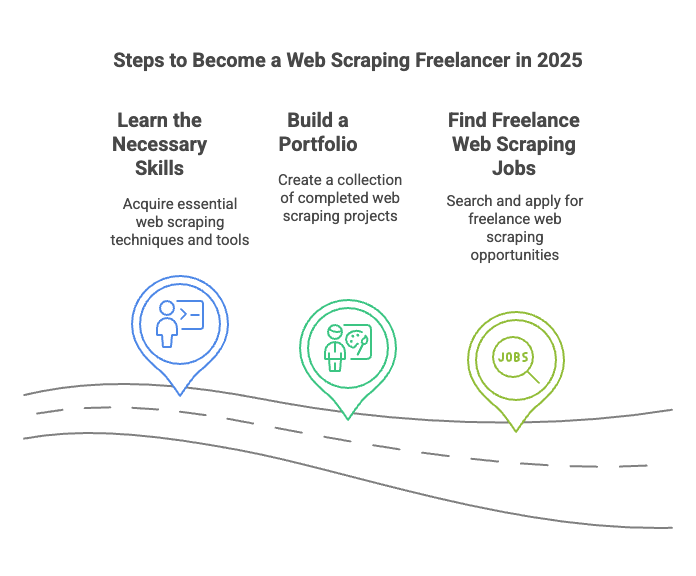

How to Become a Web Scraping Freelancer in 2025

1. Learn the Necessary Skills

To become a successful web scraping freelance expert, you need proficiency in:

- Programming Languages: Python (BeautifulSoup, Scrapy) and JavaScript (Puppeteer), plus Selenium, which has bindings for both languages.

- APIs & Data Extraction: REST APIs, JSON, and XML parsing.

- Data Storage & Processing: SQL, NoSQL databases, and cloud storage solutions.

- Proxy & Bypassing Techniques: Handling CAPTCHA, rotating IPs, and headless browsing.
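As a rough illustration of the JSON and XML parsing skill listed above, the sketch below normalizes the same kind of record from both formats using Python's standard library. The payloads, the field names (`id`, `title`, `remote`), and the two helper functions are hypothetical; a real API defines its own schema.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical API responses; real endpoints define their own fields.
JSON_PAYLOAD = '{"listings": [{"id": 1, "title": "Data Engineer", "remote": true}]}'
XML_PAYLOAD = """<listings>
  <listing id="2"><title>Web Scraper</title><remote>false</remote></listing>
</listings>"""


def listings_from_json(text):
    """Parse a JSON document and keep only the fields we care about."""
    data = json.loads(text)
    return [
        {"id": item["id"], "title": item["title"], "remote": item["remote"]}
        for item in data["listings"]
    ]


def listings_from_xml(text):
    """Parse the equivalent XML document into the same record shape."""
    root = ET.fromstring(text)
    rows = []
    for node in root.findall("listing"):
        rows.append({
            "id": int(node.get("id")),                   # attributes arrive as strings
            "title": node.findtext("title"),
            "remote": node.findtext("remote") == "true",  # convert text to bool
        })
    return rows


if __name__ == "__main__":
    print(listings_from_json(JSON_PAYLOAD) + listings_from_xml(XML_PAYLOAD))
```

Converting both sources into one record shape early keeps the storage and analysis steps independent of where the data came from.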

2. Build a Portfolio

Showcase your skills by creating scraping projects:

- Personal projects: Scrape and analyze publicly available datasets.

- GitHub repositories: Share your scripts and solutions.

- Blog writing: Publish articles on your scraping methods and case studies.

3. Find Freelance Web Scraping Jobs

Several platforms offer freelance web scraping gigs, including:

- Upwork – One of the largest freelancer marketplaces.

- Freelancer – Hosts web scraping-related projects.

- Toptal – Ideal for experienced freelancers.

- PeoplePerHour – Another great option for tech professionals.

Alternatively, you can check Easy Data for potential web scraping job opportunities and industry insights.

How to Hire a Web Scraping Freelancer

Key Factors to Consider

If you’re looking to hire a web scraping freelancer, consider the following:

- Experience & Portfolio: Review past work samples and technical expertise.

- Technical Skills: Ensure they have proficiency in Python, APIs, and databases.

- Understanding of Legal Compliance: Ask how they handle terms of service, robots.txt, and personal data, and avoid freelancers who rely on unethical methods.

- Communication & Reliability: They should provide clear updates and meet deadlines.

Where to Find Freelancers

- Freelance Marketplaces: Upwork, Freelancer, and Fiverr.

- Web Scraping Agencies: Companies like Easy Data offer professional scraping services.

- LinkedIn & GitHub: Directly reach out to experts in the field.

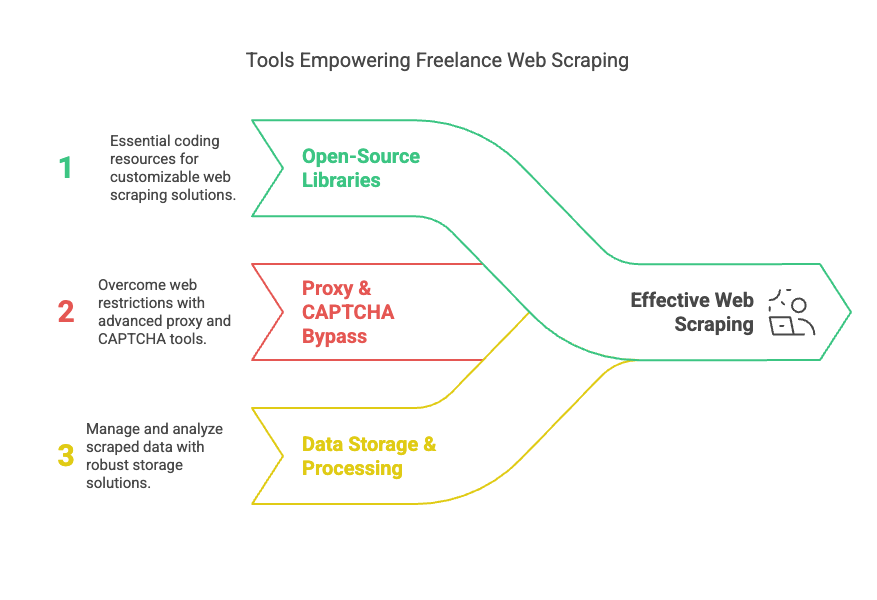

Best Web Scraping Tools for Freelancers

Here are some essential tools for web scraping professionals:

Open-Source Libraries

- BeautifulSoup: Ideal for simple HTML parsing.

- Scrapy: A powerful framework for large-scale scraping projects.

- Selenium: A browser automation tool, well suited to JavaScript-rendered websites.

- Puppeteer: A Node.js library that controls Chrome or Chromium, typically in headless mode, for complex scraping tasks.

Proxy & CAPTCHA Bypass Services

- Bright Data (formerly Luminati) – Premium proxy service.

- ScraperAPI – Handles CAPTCHA and IP rotation.

- 2Captcha – A service for solving CAPTCHA challenges.

Data Storage & Processing Tools

- MongoDB – NoSQL database for handling large datasets.

- PostgreSQL – Popular relational database.

- AWS S3 & Google Cloud Storage – Cloud-based storage solutions.
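The storage step can be sketched with Python's built-in `sqlite3` module, used here only as a stand-in for a relational database such as PostgreSQL so that the example runs without a server. The `prices` table, its columns, and the helper functions are assumptions made for illustration.

```python
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) a database with a hypothetical `prices` table."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS prices ("
        " url TEXT PRIMARY KEY,"
        " name TEXT NOT NULL,"
        " price REAL NOT NULL)"
    )
    return conn


def save_prices(conn, rows):
    """Insert scraped rows; a row with an existing URL replaces the old one."""
    with conn:  # commits on success, rolls back on error
        conn.executemany(
            "INSERT OR REPLACE INTO prices (url, name, price)"
            " VALUES (:url, :name, :price)",
            rows,
        )


def load_prices(conn):
    """Return all stored rows as (url, name, price) tuples, ordered by URL."""
    return conn.execute(
        "SELECT url, name, price FROM prices ORDER BY url"
    ).fetchall()


if __name__ == "__main__":
    store = open_store()
    save_prices(store, [
        {"url": "https://example.com/a", "name": "Widget", "price": 19.99},
    ])
    print(load_prices(store))
```

Keying each row on the page URL means a repeated scrape updates the record instead of duplicating it, which matters for recurring jobs such as price monitoring.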

Legal and Ethical Considerations

While web scraping is powerful, it must be done ethically and legally.

Understanding Legal Risks

- Terms of Service (ToS): Many websites prohibit scraping in their ToS.

- Robots.txt: Many sites publish a robots.txt file that states which paths automated crawlers may and may not access.

- GDPR & CCPA Compliance: Avoid scraping personal data without consent or another lawful basis.

Ethical Web Scraping Practices

- Respect Website Policies: Follow robots.txt guidelines.

- Avoid Overloading Servers: Use rate limiting to prevent disruption.

- Use Official APIs When Available: An API is the access route the provider intends, though its terms of use still apply.
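Two of the practices above, honoring robots.txt and rate limiting, can be sketched with Python's standard `urllib.robotparser` module. The robots.txt content, the `my-bot` user agent, and the `PoliteFetcher` class are hypothetical; in a real project the rules would be downloaded from the target site, and `Crawl-delay` is a non-standard directive that not every site sets.

```python
import time
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; normally fetched from the target site.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def build_rules(robots_text):
    """Parse robots.txt text into a RobotFileParser without any network call."""
    rules = RobotFileParser()
    rules.parse(robots_text.splitlines())
    return rules


class PoliteFetcher:
    """Checks robots.txt rules and spaces out requests by a minimum delay."""

    def __init__(self, rules, user_agent, default_delay=1.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.rules = rules
        self.user_agent = user_agent
        # Use the site's Crawl-delay when present, else a fallback delay.
        self.delay = rules.crawl_delay(user_agent) or default_delay
        self._clock = clock
        self._sleep = sleep
        self._last_request = None

    def allowed(self, url):
        """Return True if robots.txt permits this user agent to fetch the URL."""
        return self.rules.can_fetch(self.user_agent, url)

    def wait_turn(self):
        """Block until at least `delay` seconds have passed since the last call."""
        if self._last_request is not None:
            remaining = self.delay - (self._clock() - self._last_request)
            if remaining > 0:
                self._sleep(remaining)
        self._last_request = self._clock()


if __name__ == "__main__":
    fetcher = PoliteFetcher(build_rules(SAMPLE_ROBOTS), "my-bot")
    for url in ("https://example.com/products", "https://example.com/private/data"):
        if fetcher.allowed(url):
            fetcher.wait_turn()
            print("would fetch", url)   # the actual HTTP request would go here
        else:
            print("skipping", url)
```

The clock and sleep functions are passed in as parameters so that the delay logic can be exercised in tests without actually waiting.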

Conclusion

Freelance web scraping in 2025 offers strong opportunities for skilled professionals. Whether you’re looking to build a career as a freelancer or hire one, understanding the tools, techniques, and legal requirements will set you up for success. For more insights and resources, visit Easy Data.
